
Code with agents (without breaking things)

Trusting your agentic tools frees you up to move WAY faster

Getting the most out of AI tools requires being able to actually trust them.

But you can’t just blindly trust AI tools to write code for you and ship it without issues. You might win sometimes, but you’ll eventually have a serious problem. It’s not responsible.

If you don’t want to end up doing something like deleting your production database in 9 seconds, this article (and newsletter!) is for you.

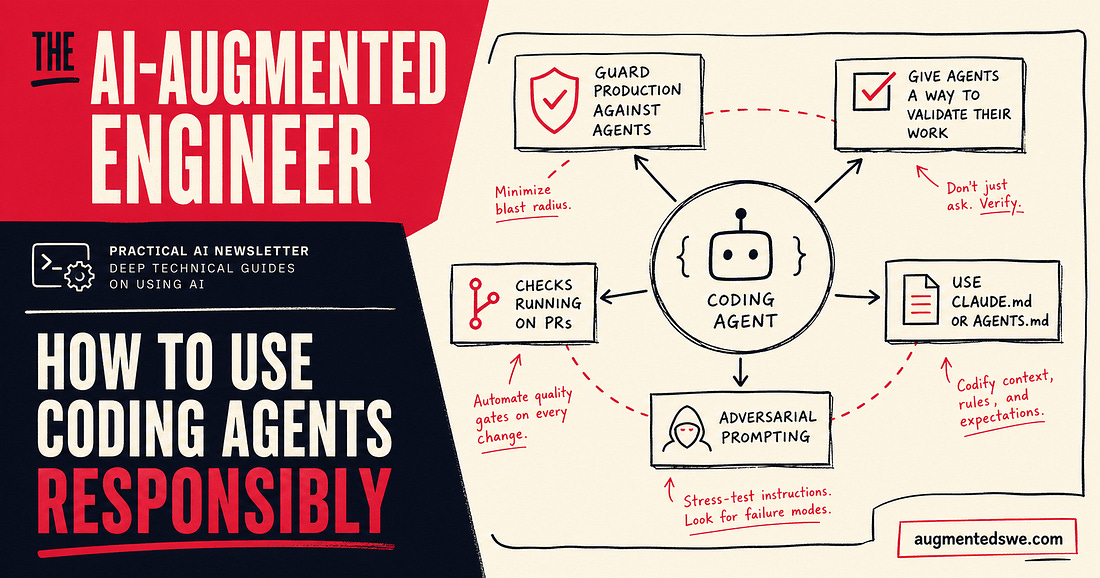

Guard production against agents

Your first job is containment.

Agents should not have direct access to production systems. No direct database credentials. No ability to run destructive commands.

If you don’t already follow these patterns, you should start:

Read-only replicas for exploration

Feature flags for risky changes

Strict environment separation

Scoped API keys with minimal permissions

If an agent can cause irreversible damage in one step, that’s not an AI problem. That’s a systems design problem.

Design your environment so the worst-case agent mistake is annoying, not catastrophic.
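Here’s a minimal sketch of the read-only idea, using SQLite from the Python standard library as a stand-in for a real replica. The `open_read_only` and `demo` names, the `users` table, and the temp-file setup are all made up for illustration. With a real database you would more likely hand the agent a role that only has SELECT rights, but the principle is the same: the connection itself refuses writes, so you aren’t relying on the agent to behave.

```python
import os
import sqlite3
import tempfile


def open_read_only(db_path: str) -> sqlite3.Connection:
    """Open a SQLite database so that any write attempt fails."""
    # mode=ro is a standard SQLite URI parameter; uri=True turns on URI parsing.
    return sqlite3.connect(f"file:{db_path}?mode=ro", uri=True)


def demo() -> tuple[list, str]:
    # Build a throwaway database standing in for a replica (illustrative only).
    db_path = os.path.join(tempfile.mkdtemp(), "replica.db")
    setup = sqlite3.connect(db_path)
    setup.execute("CREATE TABLE users (id INTEGER PRIMARY KEY, name TEXT)")
    setup.execute("INSERT INTO users (name) VALUES ('ada')")
    setup.commit()
    setup.close()

    # This is the only handle the agent would ever get.
    agent_conn = open_read_only(db_path)
    rows = agent_conn.execute("SELECT name FROM users").fetchall()
    try:
        agent_conn.execute("DROP TABLE users")
        outcome = "write succeeded"
    except sqlite3.OperationalError as exc:
        outcome = f"write blocked: {exc}"
    finally:
        agent_conn.close()
    return rows, outcome
```

Reads work as usual, and the destructive statement fails at the connection level instead of depending on a prompt that says “please don’t drop tables.”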

Give agents a way to validate their work

Most bad outcomes happen because the agent had no feedback loop.

You asked it to implement something. It wrote code. But nobody checked whether it actually worked.

You need to make validation the default path. At minimum you should have:

A test suite the agent can run and iterate on

Linters and type checks it can use as guardrails

Clear instructions like “do not stop until tests pass”

Agents are surprisingly good at self-correction when given fast feedback. Without it, they’ll happily ship broken logic that looks very convincing.

If your repo doesn’t have good tests, agents will expose that immediately.
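One way to make validation the default path is to give the agent a single entry point that runs every check and reports what failed. This is a rough sketch, not a prescribed setup: `run_checks`, `summarize`, and `CheckResult` are hypothetical names, and the commands in `EXAMPLE_CHECKS` assume you have pytest, ruff, and mypy installed. Swap in whatever your repo actually uses.

```python
import subprocess
import sys
from dataclasses import dataclass


@dataclass
class CheckResult:
    name: str
    passed: bool
    output: str


# Assumption: these tools are installed in your project. Replace as needed.
EXAMPLE_CHECKS = {
    "tests": ["pytest", "-q"],
    "lint": ["ruff", "check", "."],
    "types": ["mypy", "."],
}


def run_checks(checks: dict[str, list[str]]) -> list[CheckResult]:
    """Run each command and record whether it exited with status 0."""
    results = []
    for name, command in checks.items():
        proc = subprocess.run(command, capture_output=True, text=True)
        results.append(
            CheckResult(name, proc.returncode == 0, proc.stdout + proc.stderr)
        )
    return results


def summarize(results: list[CheckResult]) -> str:
    """Produce a one-line verdict the agent can act on."""
    failed = [r.name for r in results if not r.passed]
    if not failed:
        return "all checks passed"
    return "failing checks: " + ", ".join(failed)
```

Point the agent at `summarize(run_checks(EXAMPLE_CHECKS))` and tell it not to stop until the answer is “all checks passed.” The captured `output` on each result gives it the details it needs to fix what failed.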

Many AI coding tools can now drive a browser too, so if you’re working on something with a web frontend, let your agent check its work that way as well.

Checks running on PRs

Never trust a single pass from an agent. Every change should go through the same CI pipeline: tests, linters, static analysis, and security scans.

You should treat agent-generated code exactly like code from a new hire on their first week. Your new hire might be the most competent developer in your city, but they don’t have all the context you do.

Better yet, assume it’s slightly worse. No agent should be able to push directly to main, bypass PR review, or skip CI.

Even if you’re a solo developer.

The moment you remove friction here, you remove your last line of defense. Agents are fast enough that a bad change can go from idea to production before you even realize what happened.
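As a local backstop, a Git `pre-push` hook can refuse direct pushes to protected branches. Git feeds the hook one line per ref on stdin in the form `<local ref> <local sha> <remote ref> <remote sha>`. The sketch below parses that format. `PROTECTED_REFS`, `blocked_pushes`, and `main` are names I picked, and the branch list is an assumption. A local hook can be skipped with `--no-verify`, so treat this as a seatbelt on top of server-side branch protection, not a replacement for it.

```python
import sys

# Assumption: adjust to the branches you actually protect.
PROTECTED_REFS = {"refs/heads/main"}


def blocked_pushes(hook_stdin_lines, protected=PROTECTED_REFS):
    """Return the remote refs in this push that target a protected branch."""
    blocked = []
    for line in hook_stdin_lines:
        parts = line.split()
        if len(parts) != 4:
            continue  # skip blank or malformed lines
        _local_ref, _local_sha, remote_ref, _remote_sha = parts
        if remote_ref in protected:
            blocked.append(remote_ref)
    return blocked


def main() -> int:
    """Hook entry point: a non-zero return value makes Git abort the push."""
    blocked = blocked_pushes(sys.stdin)
    if blocked:
        print("Direct push refused for: " + ", ".join(blocked), file=sys.stderr)
        print("Open a pull request so CI and review can run.", file=sys.stderr)
        return 1
    return 0
```

To try it, save this as an executable `.git/hooks/pre-push` with a Python shebang and add `sys.exit(main())` at the bottom.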

CLAUDE.md or AGENTS.md

Agents perform dramatically better when they have clear, persistent context.

Create a file in your repo that tells them how to behave, so you don’t have to repeat yourself between chat sessions. Give it a project architecture overview, your coding standards and patterns, and instructions for running tests and other common commands.

It’s also a good place for keeping things safe. Include guidelines for what not to touch, and even known sharp edges in the codebase.

Where you really see benefits is in updating this doc. When an agent makes a mistake or does something stupid, tell it to update the context file with instructions to never do it again.

Adversarial prompting

This is the closest thing you have to a second opinion. Before merging, open a fresh context window and ask an agent to review the changes. I find this works much better if you’re very explicit in your prompt:

Does this do exactly <insert what you want it to do>?

What could break in production?

Giving it a persona helps too. “Act like a grumpy cracked staff engineer reviewing a risky PR” is probably my favorite.

The important part is separation. A fresh context window keeps the model from defending its own previous work. I always pick the model’s highest reasoning setting for this step too.
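If you run this review often, it can help to template the prompt so the explicit questions never get dropped. This is only a sketch: `build_review_prompt` is a hypothetical helper, the wording is mine, and it deliberately stops at building the text. How you send it to a fresh session depends on your tool.

```python
def build_review_prompt(
    intent: str,
    diff: str,
    persona: str = "a grumpy staff engineer reviewing a risky PR",
) -> str:
    """Assemble an explicit, adversarial review prompt for a fresh session."""
    questions = [
        f"Does this change do exactly the following: {intent}?",
        "What could break in production?",
    ]
    numbered = "\n".join(f"{i}. {q}" for i, q in enumerate(questions, start=1))
    return (
        f"Act like {persona}.\n"
        "You did not write this change. Review it critically.\n\n"
        f"Answer each question explicitly:\n{numbered}\n\n"
        f"Diff under review:\n{diff.strip()}\n"
    )
```

Paste the result into a brand-new context window instead of the session that wrote the code, so the reviewer has no prior work to defend.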

Thanks for being a paying subscriber to the newsletter - you rock! As always, if you have any feedback or topic requests, just leave a comment or hit reply!

Thank you for your support as a paid member of The AI-Augmented Engineer! Your contribution enables me to continue writing quality content like this.