
Hey friends 👋 Happy Sunday.

Here’s your weekly dose of AI and insight.

Every Wednesday, Signal Pro members get a step-by-step AI workflow they can apply immediately. No fluff, just practical guides to upskill you and your team. If you’re only reading the Sunday issue, you’re getting half the picture. Upgrade to paid today.

AI Highlights

My top-3 picks of AI news this week.

OpenAI

1. OpenAI Is Cooking

OpenAI packed four major launches into a single week, reasserting momentum on every surface after months of narrative pressure from Anthropic and Google.

ChatGPT Images 2: OpenAI released its next-gen image model with 2K resolution, legible text across non-Latin scripts, and a Thinking mode that can search the web mid-generation. It went straight to #1 across all Image Arena leaderboards, beating Google’s Nano Banana models with a +242 point lead in Text-to-Image, the largest gap ever recorded.

GPT-5.5: GPT-5.5 is OpenAI’s first fully retrained base model since GPT-4.5, built for multi-step work that needs planning, tool use, and self-checking. It scored 82.7% on Terminal-Bench 2.0 and can carry tasks through to completion, the real unlock for agent deployment.

Agents and clinicians: OpenAI also shipped workspace agents, Codex-powered shared agents for Business and Enterprise plans that plug into Slack, Salesforce, Notion, and Google Drive with persistent memory and role-based governance. Alongside them came ChatGPT for Clinicians, a free tool for verified US physicians, NPs, PAs, and pharmacists, with cited medical sources and HIPAA options.

Alex’s take: Four launches in one week, and the OpenAI team is absolutely cooking. Nano Banana 2 had been my daily driver for image generation for the last two months. Not anymore. GPT Image 2 is the first model whose outputs don’t look AI-generated, whereas Gemini’s Nano Banana still has certain “tells” in typography, colouring, shadows, and so on. I wrote about it this week. Meanwhile, Anthropic quietly pulled Claude Code from new Pro plans to ration compute, and Sam spent the week trolling them with “ok boomer” and “come to the light side”. There’s a real vibe shift: Sam’s more playful and not taking things as seriously, and honestly, I’m here for it. More competition means better products for us as consumers. Anthropic doesn’t have enough compute, so it’s picking its battles and still hasn’t shipped image gen. OpenAI has the capital to push on every front at once. It’s incredible how much can change in the space of a week in this market.

Anthropic

2. The Anthropic Sweep

Reach was the theme of Anthropic’s week. Claude showed up in new corners of the workplace, picked up a proper memory layer for developers building production agents, and landed in a new set of consumer apps outside of work.

Live artifacts in Cowork: Claude can now build live artifacts in Cowork, dashboards and trackers that stay connected to your apps and files and pull in fresh data every time you reopen them. Early power users report building full tools in minutes.

Memory for Managed Agents: Agents on the Claude Platform can now learn across sessions, with memories stored as editable files that developers can export, audit, version, and roll back via API, rather than buried in a black-box vector store.

Consumer connectors: Claude now plugs into fifteen new everyday apps, including Booking.com, Resy, Spotify, Audible, Instacart, AllTrails, Thumbtack, TurboTax, and Uber, taking the directory past 200 connectors.

Alex’s take: I’d say the memory update is the biggest one here. Storing agent memory as editable text files instead of a black-box vector store means developers can actually read, version, and audit what their agents remember. Perhaps the next great software moat isn’t just raw model capability but how well an agent can remember, update, and safely act on a team’s accumulated context. Meanwhile, heavy users are still angry about Opus 4.7 burning through weekly limits. For Anthropic, which is clearly compute-constrained, this is a tough balance to strike: it has state-of-the-art models people desperately want to use, but not the firepower to keep subsidising users when margins are this thin.
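To make the “memory as files” idea concrete, here’s a minimal Python sketch under my own assumptions. The `FileMemoryStore` class, its `live/` and `history/` folders, and the numbered snapshots are all hypothetical; this is not Anthropic’s Managed Agents API, just an illustration of why plain files are easier to audit and roll back than a vector store: every memory is readable text, and undoing a bad update means restoring an earlier copy.

```python
import shutil
import tempfile
from pathlib import Path


class FileMemoryStore:
    """Hypothetical agent memory kept as plain text files on disk.

    Each memory is one human-readable .md file, so it can be read, diffed,
    exported, and rolled back with ordinary tools. Illustration only; not
    Anthropic's Managed Agents API.
    """

    def __init__(self, root: Path):
        self.live = root / "live"        # current memories
        self.history = root / "history"  # numbered snapshots of live/
        self.live.mkdir(parents=True, exist_ok=True)
        self.history.mkdir(parents=True, exist_ok=True)

    def write(self, name: str, text: str) -> None:
        # Snapshot the whole live folder before every change so any edit can be undone.
        self._snapshot()
        (self.live / f"{name}.md").write_text(text, encoding="utf-8")

    def read(self, name: str) -> str:
        return (self.live / f"{name}.md").read_text(encoding="utf-8")

    def audit(self) -> dict[str, str]:
        # Everything the agent currently "remembers", as plain text.
        return {p.stem: p.read_text(encoding="utf-8") for p in sorted(self.live.glob("*.md"))}

    def versions(self) -> list[int]:
        return sorted(int(p.name) for p in self.history.iterdir())

    def rollback(self, version: int) -> None:
        # Replace the live memories with an earlier snapshot.
        shutil.rmtree(self.live)
        shutil.copytree(self.history / str(version), self.live)

    def _snapshot(self) -> None:
        next_version = len(self.versions())
        shutil.copytree(self.live, self.history / str(next_version))


with tempfile.TemporaryDirectory() as tmp:
    store = FileMemoryStore(Path(tmp))
    store.write("customer_prefs", "Prefers email over phone.")
    store.write("customer_prefs", "Prefers phone. (a bad update)")
    store.rollback(1)  # snapshot 1 holds the state just before the second write
    print(store.read("customer_prefs"))  # → Prefers email over phone.
```

Copying the whole folder on every write is wasteful at scale, and a real system would more likely lean on something like Git, but it keeps the read, audit, and rollback story easy to follow.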

SpaceX

3. SpaceX Courts Cursor

SpaceX announced a deep partnership with Cursor, pairing Cursor’s product and distribution with SpaceX’s training compute to build the world’s best coding and knowledge work AI.

The option structure: SpaceX has the right to acquire Cursor later this year for $60 billion, or pay Cursor $10 billion for the collaborative work. Commentators on X flagged that the $10 billion floor is likely compute credits rather than cash, a subsidy with an exit attached.

Why Cursor needed this: Cursor’s own model, Composer 2, was built on top of Moonshot’s Kimi, and community response to its model work had been lukewarm. A million H100 equivalents via Colossus gives Cursor a real shot at training a frontier model, and the deal slots into SpaceX’s broader push toward orbital data centres via scaled Starlink V3 satellites.

The AI lab meta: Kevin Kwok argues top coding labs now need to own both the model and the product to close the loop. Distribution without a model is a rental agreement, and every dev tool company becomes either a model company or a feature of one.

Alex’s take: The “middle ground” for AI products has evaporated. Cursor saw this first-hand when it built Composer 2 on top of Kimi, and this deal is the logical next step. Training its own frontier model is the only way to avoid becoming a feature inside a larger model provider, and SpaceX’s compute (plus eventual orbital data centres) gives Cursor the infrastructure to build models that can compete with the likes of Anthropic and OpenAI. Whether SpaceX buys Cursor for $60 billion or pays the $10 billion for the collaboration instead, Cursor lands somewhere.

|

Content I Enjoyed

Altman’s Antidote

Sam Altman recently took the stage in San Francisco to unveil World ID 4.0, the most ambitious iteration yet of his iris-scanning identity venture (formerly Worldcoin). His pitch: in a world drowning in AI-generated content, we need “full-stack proof of human” infrastructure to tell real people apart from machines, and Altman says we’re already heading there.

The announced integrations bring this to life. Tinder is rolling out verified-human badges in the US. Zoom built “Deep Face,” which cross-checks three things on every call: the iris-scanned image from the original Orb verification, a live selfie on the participant’s device, and the video frame everyone else sees. DocuSign is attaching proof-of-human to digital signatures. Shopify, Okta, AWS, Vercel, and Visa are all building on top of it. Some 18 million people across 160 countries have already scanned their irises at an Orb.

Sitting underneath the consumer announcements is AgentKit, a system that lets AI agents carry cryptographic proof they’re acting on behalf of a verified human. Vercel’s “human in the loop” workflow is already live, and Okta is planning a product called Human Principal that lets API builders enforce policies based on whether a real person stands behind an agent. Once autonomous agents are executing transactions at scale, that proof becomes a requirement, and Altman wants World ID to be the rail that provides it, taking a toll on every bot-executed transaction made on a human’s behalf.

The obvious tension is that the person selling humanity’s verification layer also runs the company that did more than anyone to contaminate the internet with synthetic content in the first place. And on a related front, Mythos, the Anthropic model deemed “too dangerous to release”, was accessed on day one by four people in a private Discord.

They worked out Anthropic’s endpoint naming pattern from data leaked in the Mercor breach three weeks earlier, guessed the URL, and logged in with a contractor’s legitimate evaluation credentials. They have reportedly been using the supposedly world-ending model to build simple websites. Frontier AI security is still being held together with string.

Idea I Learned

What Was Anthropic Thinking?

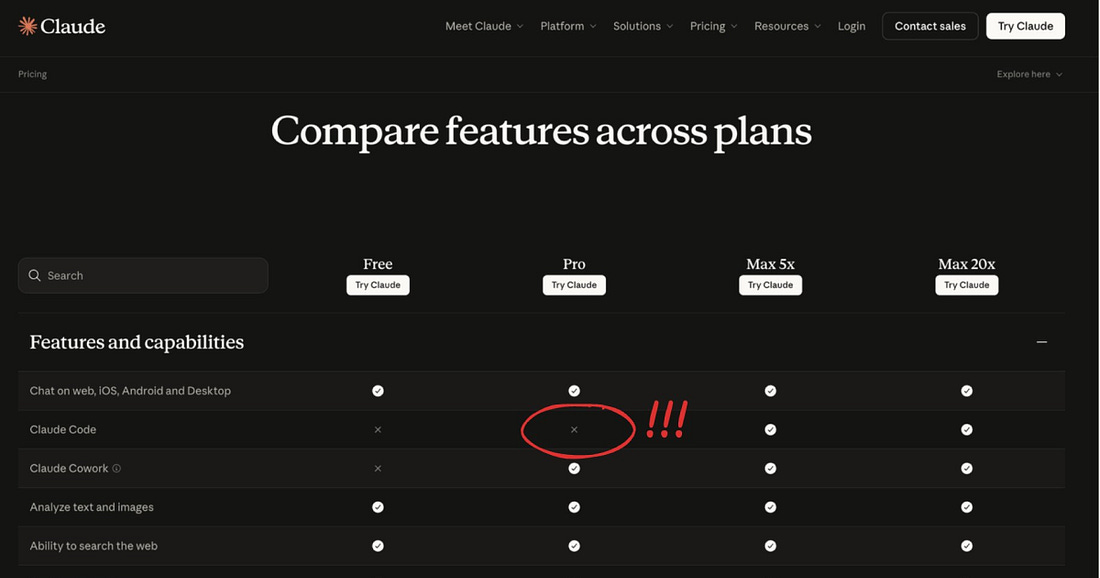

This week, Anthropic quietly removed Claude Code from its $20 Pro plan. The updated pricing page made Max, at $100/month, the new entry point for agentic coding. Gergely Orosz spotted the change and posted the updated page. Within hours, critics called it “borderline suicidal” for a company whose reputation is built on coding.

The economic logic is sound: inference costs for coding agents are severe, and reallocating compute from $20 users to higher-LTV customers improves unit economics overnight. Every major lab has a capacity problem, and rationing at the entry tier is one way to solve it.

Interestingly, Claude Code reappeared on the Pro pricing page within 24 hours. That suggests the change was a pricing-elasticity test rather than a permanent cut: a “fake door” probe to see who would churn and who would pay up. But that creates its own problem. Anthropic markets safety and integrity as core product differentiators, and a silent test on the tier where most developers begin their journey sits awkwardly against that positioning and further erodes developer relations.

Hours later, OpenAI's Rohan Varma parodied the move by posting that OpenAI was running "a small test for ~100% of Codex users" with top models unlocked across every plan, free and paid, mocking Anthropic's fake-door language.

This is the vibe shift playing out. Whilst the company has continued to ship consistently, the last few months have been a slow accumulation of bruises for Anthropic’s brand. Opus 4.6 was quietly nerfed in February, and users called the company out publicly when response quality dropped.

Trust is supposed to be the moat, yet quietly downgrading models and then quietly testing whether developers will swallow a 5x price hike runs against the brand Anthropic has spent the last three years building. And it landed in the same week OpenAI had its cleanest counter-narrative in some time.

Quote to Share

Auguste Compt on the UK's Tech Town announcement:

The UK government named Barnsley its first official Tech Town back in February, with £500,000 in seed funding over 18 months and a brief to act as a national blueprint for AI across schools, the NHS, and local businesses. Spread evenly across the borough’s ~250,000 residents, the budget works out to pennies per person per month.

Anthropic, on the other hand, are paying £630K as a base salary for a single Research Engineer in London. Stock pushes total comp close to a million pounds per engineer. The company is fitting out an 800-person King’s Cross office while OpenAI doubles its London headcount past 500.

Britain has two AI economies running in parallel. King’s Cross is a frontier lab cluster funded by US capital. Barnsley is a public service rollout coordinated with Microsoft, Cisco, Google, and Adobe, who supply the actual AI underneath.

I want this to work. Britain has every ingredient to be a serious AI player. But we’re in a world right now where one AI engineer’s salary is more than an entire town’s tech investment. What’s missing is policy ambition matched to the scale of what’s actually happening in King’s Cross.

Source: Auguste Compt on X

Question to Ponder

“I keep hearing that AI competitors are quietly using each other’s models and infrastructure to build their own products. Is this actually happening?”

Yes, and the evidence is quickly stacking up.

The Information reported this week that Google has assembled a strike team, co-led by Google co-founder Sergey Brin (who has returned to the company) and DeepMind CTO Koray Kavukcuoglu, to close the gap on coding models. Brin told staff that every Gemini engineer must use internal agents for complex, multi-step tasks, and that the eventual goal is what he calls “AI takeoff”: AI that can improve itself.

Steve Yegge’s follow-up post, corroborated by anonymous Googlers, highlights that DeepMind engineers reportedly use Claude as a daily tool, whereas most of the rest of Google does not. When leadership floated removing Claude across the board, DeepMind objected hard enough that some engineers threatened to walk.

Relying on a competitor’s tool is a clear forcing function for Google to build a better coding product of its own. Paying for thousands of engineers to have enterprise access to that tool is also a tall ask. But that’s exactly the situation at the lab building Google’s frontier model.

The same pattern is repeating across the ecosystem. Anthropic runs Claude on Google’s TPUs in a multi-year, multi-billion-dollar deal. AWS poured billions into Anthropic and built Kiro (a competitor to Cursor and Claude Code) around Claude. Microsoft funded OpenAI, then opened GitHub Copilot to multiple models, Claude included.

If the people building these models reach for whichever tool works best (even a competitor’s), there’s little point in staying loyal to just one as a consumer.

Use what works, and make your workflows fluid between models. The labs are already doing this.

Already a subscriber? Get your whole team on board. Signal Pro group subscriptions give everyone access to weekly AI workflows and tutorials, practical upskilling that pays for itself. It’s the kind of thing L&D budgets were made for. Share this with your manager today.

|

💡 If you enjoyed this issue, share it with a friend.

See you next week,

Alex Banks