Hey friends 👋 Happy Sunday.

Here’s your weekly dose of AI and insight.

Every Wednesday, Signal Pro members get a step-by-step AI workflow they can apply immediately. No fluff, just practical guides to upskill you and your team. If you’re only reading the Sunday issue, you’re getting half the picture. Upgrade to Pro today.

AI Highlights

My top-3 picks of AI news this week.

1. Gemini Gets to Work

Google announced a coordinated wave of Gemini updates this week, headlined by a global rollout of file generation that lets users create ready-to-download Docs, Sheets, Slides, and PDFs without copying, pasting, or reformatting.

Files in chat: Gemini can now generate Docs, Sheets, Slides, PDFs, .docx, .xlsx, .csv and Markdown files directly inside the conversation. The feature is rolling out globally to free and paid users, though there is no native PowerPoint export at launch.

UK Memories live: The Memories setting launched for UK users (on by default) letting Gemini retain preferences and key details across conversations, with controls in Personal context > Memory.

Import from rivals: A new switching tool accepts ZIP exports of full chat history from competing AI apps, including ChatGPT, plus a copy-paste flow that pulls memory summaries from rival assistants into Gemini.

Alex’s take: It’s interesting how late Google is to the file generation game. ChatGPT has been creating Excel and PowerPoint files from prompts since July 2025. Claude added file creation in September. Google is shipping the same capability in April 2026, 7-9 months behind its fiercest competitors. On the memory side, Google had cross-chat memory globally over a year ago, so the UK launch is regulatory catch-up. Over the past quarter Google has been boiling the ocean: Personal Intelligence, a native Mac app, Notebooks, Lyria 3 Pro for music, browser agents, file generation, UK Memories. Meanwhile its last frontier model was Gemini 3.1 Pro back in February. In the same window, Anthropic shipped Opus 4.6 then 4.7, and OpenAI moved through GPT-5.3 → 5.4 → 5.5. An idea I often reflect on is that Google’s structural advantage is the greatest in the entire AI market. It sits across Workspace, Search, Android, TPUs, and free distribution at a scale no one else can touch. But those advantages don't compound on their own. Google has the long-term hand. It needs to play it with more focus and velocity at the model layer, before a competitor's meaningfully smarter model eclipses all its integration work.

Anthropic

2. Claude’s Big Pull

Anthropic spent the week turning Claude into the hub for professional work, pulling nine creative apps into the chat and launching a new Enterprise security product within 48 hours.

Creative coalition: Anthropic launched nine connectors covering Adobe Creative Cloud (50+ tools), Blender, Autodesk Fusion, Ableton, Splice, Affinity by Canva, SketchUp, and Resolume, letting users debug 3D scenes, batch-edit assets, and orchestrate multi-app workflows directly inside Claude.

Claude Security: A new product, Claude Security, is now in public beta for Enterprise. Opus 4.7 scans codebases, validates findings to cut false positives, and proposes patches reviewers can approve in a single sitting.

Defender consortium: Six security platforms (CrowdStrike, Microsoft Security, Palo Alto Networks, SentinelOne, TrendAI, Wiz) and five consultancies (Accenture, BCG, Deloitte, Infosys, PwC) are embedding Opus 4.7 directly into the security stacks enterprises already run.

Alex’s take: Anthropic spent April flexing Claude Mythos's capabilities. The model found 271 zero-day vulnerabilities in Firefox in a single sweep, nearly four times what Mozilla patched across all of 2025. Anthropic then locked the model behind Project Glasswing for around 50 critical-infrastructure partners. Claude Security is the generally available counterpart, running on Opus 4.7 and shipping to every Enterprise customer. It’s also worth noting the “full-stack” fix that Anthropic is capable of here. Find with Claude Security, fix with Claude Code, ship through the same vendor. It’s the entire software lifecycle inside one company, and it's why Anthropic now holds 40% of enterprise LLM spend and 54% of enterprise coding share, and has just passed OpenAI at $30B ARR.

OpenAI

3. OpenAI Unchained

OpenAI spent the week redrawing its biggest cloud relationship and shipping developer infrastructure that puts the frontier conversation back in play.

Multi-cloud freedom: Microsoft and OpenAI amended their partnership, freeing OpenAI to sell across any cloud while Microsoft keeps a license to OpenAI IP through 2032 and revenue share through 2030.

Bedrock landing: Within 24 hours, OpenAI’s frontier models, Codex, and Managed Agents launched in limited preview on Amazon Bedrock, opening every enterprise locked into AWS procurement to OpenAI for the first time.

Agent orchestration play: OpenAI open-sourced Symphony, a spec that turns issue trackers like Linear into control planes for autonomous Codex agents, amassing 15K GitHub stars in days and a reported 500% jump in landed PRs internally.

Alex’s take: A month ago the consensus was Anthropic had run away with developer mindshare. This week continues the tale. The Microsoft amendment pulls OpenAI out of an Azure-shaped box and into every cloud procurement contract on the planet. Add Codex turning into developer infrastructure, on AWS, inside Linear boards, wherever engineers already work, and the frontier race looks a lot less settled than it did a fortnight ago. Let’s wait for the real data to catch up now that this constraint has been removed.

Content I Enjoyed

When Labour Becomes a Manufacturing Problem

Figure just took its BotQ humanoid line from 1 robot a day to 1 robot an hour, a 24x throughput jump in 120 days. End-of-line yield is above 80% and improving weekly. The battery line is running at 99.3% first-pass. Each Figure 03 now passes 80+ functional verification tests, including thousands of squats, presses, and jogs before sign-off.

This is humanoid manufacturing starting to behave like commodity electronics. Wright’s Law, the empirical observation that unit cost falls a fixed percentage with every doubling of cumulative production, has held across solar panels, lithium-ion cells, and silicon for decades. A 24x ramp compresses several doublings into a single quarter, which collapses the cost curve.
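To put rough numbers on the Wright’s Law point above, here is a minimal Python sketch. It rests on two loud assumptions that are not from Figure: that cumulative production eventually grows by the same 24x as the throughput ramp (throughput and cumulative output are not the same thing), and a hypothetical 20% cost decline per doubling, chosen purely for illustration.

```python
import math


def wrights_law_cost(initial_cost, growth_multiple, learning_rate):
    """Unit cost after cumulative production grows by `growth_multiple`.

    Wright's Law: each doubling of cumulative output cuts unit cost by a
    fixed fraction (`learning_rate`).
    """
    doublings = math.log2(growth_multiple)
    return initial_cost * (1 - learning_rate) ** doublings


# Assumption: a 24x growth in cumulative output (mirroring the throughput ramp).
doublings = math.log2(24)

# Assumption: a hypothetical 20% learning rate, not a published Figure number.
relative_cost = wrights_law_cost(1.0, 24, 0.20)

print(f"{doublings:.2f} doublings -> unit cost at {relative_cost:.0%} of the start")
```

Under those assumptions, 24x works out to about 4.6 doublings and a unit cost of roughly 36% of the starting point. A different learning rate moves the result a lot, which is why the real curve depends on data Figure has not shared.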

That hardware curve is meeting collapsing inference costs head-on. Epoch AI’s most recent measurement puts the price of equivalent LLM performance halving roughly every two months, with declines of up to 900x per year on certain benchmarks. The “brain” inside a humanoid is rapidly converging on its energy floor. Hardware and software are reducing to a single output: a unit of physical work whose marginal cost decomposes into amortised steel, inference, and electricity, with no biological constraint anywhere in the stack.
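As a quick sanity check on the halving claim above, the sketch below converts a halving period into an annual decline factor and back. The function names are illustrative only, and it assumes a smooth exponential decline, which real price data will not follow exactly.

```python
import math


def annual_decline_factor(halving_period_months):
    """How many times cheaper a capability gets in 12 months."""
    return 2 ** (12 / halving_period_months)


def implied_halving_months(annual_factor):
    """Halving period (in months) implied by a given annual decline factor."""
    return 12 / math.log2(annual_factor)


two_month_halving = annual_decline_factor(2)
fastest_case_months = implied_halving_months(900)

print(f"Halving every 2 months -> {two_month_halving:.0f}x cheaper per year")
print(f"A 900x annual decline -> halving every {fastest_case_months:.1f} months")
```

Halving every two months compounds to 64x per year, so the 900x figure describes a much steeper curve, with prices halving roughly every five weeks on those benchmarks.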

China is running the same experiment from different angles. RobotEra is now deploying its L7 humanoid in the thousands across China Post and SF Express logistics centres, achieving up to 85% of human-level efficiency 24/7. Across a full day, that is roughly 2.5x the output of a single human shift. Unitree just launched a dual-arm humanoid at $4,290, with a 20,000-unit shipment target for 2026 and a starting price already below the annual cost of minimum-wage labour in most OECD countries.
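The 2.5x figure above follows from simple arithmetic, sketched here in Python. The 8-hour human shift is an assumption on my part, and the calculation ignores charging and maintenance downtime.

```python
def daily_output_vs_human_shift(relative_efficiency, robot_hours=24, shift_hours=8):
    """Robot output over a day, expressed in multiples of one human shift.

    `relative_efficiency` is the robot's hourly output as a fraction of a
    human's. The 8-hour default shift is an assumption.
    """
    return relative_efficiency * robot_hours / shift_hours


multiple = daily_output_vs_human_shift(0.85)
print(f"{multiple:.2f}x the output of a single human shift")
```

At 85% efficiency around the clock, that comes to about 2.55 human shifts per day, consistent with the "roughly 2.5x" quoted above.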

For two centuries, the marginal cost of labour was set by biology and education: how quickly humans could be born, raised, and trained. That floor is being replaced by a manufacturing curve. Every robot rolling off the BotQ line is also a data-collection unit for the next policy version of Helix, which means throughput is now compounding in capability as well as cost.

Idea I Learned

OpenAI’s Own Numbers Are the Problem

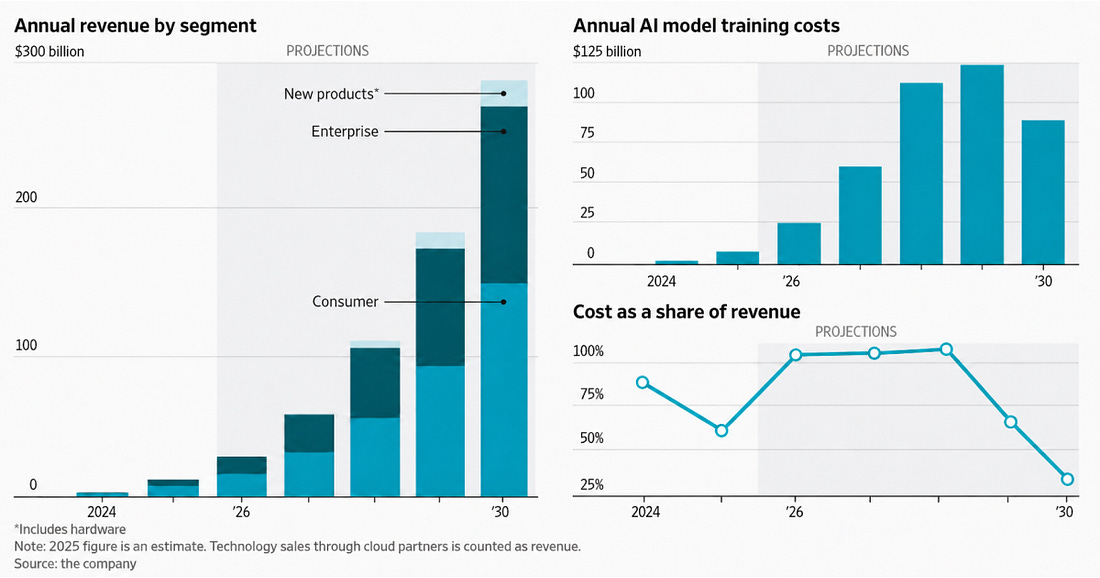

This chart from the Wall Street Journal caught my attention this week. It’s built from OpenAI’s own projections. Training costs alone are forecast to exceed total revenue for three consecutive years from 2026 to 2028, before dropping to roughly 30% of revenue by 2030. That’s an awful lot of faith to ask of public market investors.

Last October, Sam Altman cited a $1.4 trillion figure for OpenAI’s future compute commitments. CFO Sarah Friar quietly walked that number back with investors to $600 billion through 2030. The WSJ reported last week that she has since told colleagues she’s worried the company can’t pay for those contracts if growth doesn’t accelerate.

Anthropic, on the other hand, is a quieter story. Annualised revenue hit $30 billion in March, ahead of OpenAI’s $25 billion. Eight of the Fortune 10 pay for Claude. Over 1,000 enterprises spend more than $1 million a year on it. Claude Code alone runs at $2.5 billion annualised and holds 54% of the AI coding tool market. Seven of every ten new enterprise customers now pick Anthropic.

So how does this translate to the IPO calendars? Anthropic is targeting an October 2026 listing at a $400–500 billion valuation. OpenAI had been aiming for Q4 2026 too, but Friar has privately suggested waiting until 2027. Internal controls aren’t built out, and revenue is missing targets. Morningstar sees mid-2027 as the realistic window.

OpenAI bet on consumer scale and ate the costs to get there. ChatGPT image generation and Sora 2 drove huge usage spikes in 2025; both have since faded, and Sora 2 is discontinued. GPT-5.5 topped benchmarks at launch but growth has reportedly flattened. Anthropic stayed focused on enterprise, owned the coding category, and kept gross margins healthier despite its own compute strain.

Banks have told both companies that whoever lists first defines the comparable for frontier AI. If Anthropic prices on cleaner unit economics, OpenAI walks in a year later having to explain why its capital intensity is roughly twice as high for similar revenue, and why three years of its own projections show training costs above the top line.

The “buy everything” compute strategy looked like the playbook in 2024. As we move through 2026 and the idea of going public becomes ever more real, it now looks like a balance sheet problem.

Quote to Share

Alexis Ohanian on Google’s AI compute dominance:

The numbers come from Epoch AI’s latest analysis of global chip ownership, which landed days before Alphabet’s Q1 earnings.

Google Cloud grew 63% last quarter to $20 billion. Azure grew 40%, whilst AWS grew 28%. The cloud backlog nearly doubled in 90 days to $462 billion in contracted future revenue. Google CEO Sundar Pichai told investors Google is “compute constrained” and that cloud revenue would have been higher if they could meet demand.

Now look at the stack. Google owns the chips, the models and the cloud. Everyone else rents at least one layer.

Microsoft pays OpenAI. Amazon pays Anthropic. Both buy Nvidia GPUs. Anthropic runs much of its training on Google TPUs, paying the company whose model it competes with.

Alphabet’s 2026 capex is now $180-190 billion, with 2027 already guided higher. Owning the stack creates Google’s enduring advantage, and it then becomes simple math to determine who will be left standing in a decade’s time once the capex wars have played out.

Source: Alexis Ohanian on X

Question to Ponder

“As AI agents become more capable of seeing and acting across our screens, where do you see the future of human-computer interaction heading?”

Interfaces are about to look very different.

Right now, we type into chat windows, screenshot what’s on our screens, and paste them into Claude or ChatGPT to give it context. That’s an awful lot of friction.

A good example of where things are heading is Clicky. It’s pitched as the simplest interface in the world to talk to AI and spawn agents. It interacts with native Apple Notes, Calendar, and Reminders, builds Mac apps, and runs research for you.

The interesting bit is that it can see your screen.

That single capability removes the friction. It already sees what you see. You speak, and it then guides your cursor. For something like video editing in DaVinci Resolve, where the learning curve is brutal, it’s like having an expert hand-hold you through the workflow.

Now I don’t think the chat window will disappear. For iterative work like drafting a document, going back and forth in a chat still wins.

But for navigating software, voice-first, screen-aware computing changes everything.

The interface of the future is an assistant that watches your screen, listens to your voice, and moves with you through whatever you’re trying to do.

Already a subscriber? Get your whole team on board. Signal Pro group subscriptions give everyone access to weekly AI workflows and tutorials, practical upskilling that pays for itself. It’s the kind of thing L&D budgets were made for. Share this with your manager today.

💡 If you enjoyed this issue, share it with a friend.

See you next week,

Alex Banks

P.S. ChatGPT just solved a 60-year-old math problem.